It was not the Hollywood triple-A top-down script thing where you spend months scripting out a game design, the art style, you spend years implementing before you can try it out, and if it sucks, well, you hope it wasn’t your money. It was all about an interactive design. You want to start with a minimum playable design, play it, improve it and repeat until a fun game can evolve – which could be completely different from what you intended.”

Archives For February 28, 2014

GDC has brought with it the exciting news that many companies that make game engines are following in Unity’s and Game Maker’s footsteps in an effort to “democratize development”, as Unity’s CEO David Helgason puts it. Most are following a subscription model, with a variety of price points having been announced. This is great news for developers, as access to professional quality features, cross-platform development and increased productivity just got a hell of a lot cheaper. I can get a fully featured game engine with full source for less than I spend on my internet connection per month. That’s pretty crazy.

The main downer I can see at the moment is that most companies seem to be competing on cost rather than productivity. Wild-eyed developers who see the shiny features and triple-A titles that the engines have powered are already announcing the death of Unity. In truth, though, the reason you buy an engine off the shelf is to save yourself the time and money of building, maintaining and improving one of your own. Most games companies are interested in making games, not technology. As such, cost is only really an issue if you end up spending more money than you save in increased productivity. Even at the indie scale we’re talking about such small differences (tens of dollars a month) that even a mediocre productivity enhancement in a more expensive product will pay for itself many times over. Given the price point differences, the cheaper end are really saying to me “look, we know our product isn’t great but at least it’s cheap”, only the cost saving isn’t radical enough to offset the potential loss in productivity between platforms. For example, at this stage Crytek would have to pay me to use their engine over the competition. Their tool is just not as usable, which is not to say it’s a bad engine, because it isn’t, but the UX is just terrible.

Bad UX is a typical problem of internal tools. They are built in an ad-hoc manner to a “just good enough” standard and are often just plain terrible from the perspective of the user. Typical horrors involve choice paralysis, bugs and the lack of a well designed workflow that matches users’ needs. Most companies survive this because they have a huge amount of institutional expertise to guide new users and new projects through the complex maze they inhabit. This knowledge also tends to be tribal, passed on by word of mouth and custom rather than by good documentation. As soon as you can’t rely on this institutional knowledge you are basically screwed, and your productivity is going to be very bad until you learn it. This has historically been a big problem facing teams adopting internal technology and is an even bigger issue if you are licensing someone else’s technology. The reason Unity currently has far more users than other engine companies, who have also had free offerings for some time, is simply that their mission has been to empower developers, so their product is just easier and better to work with.

I’d also be very skeptical about getting seduced by revenue sharing schemes. 5% of gross revenue is an awful lot of money to ask and could well push a developer into making a loss rather than a profit on their game. For example, you make a game in one year and sell $100,000 worth of copies. $30,000 of that will go to distribution (e.g. Steam) and $5,000 goes straight to Epic (plus the $240 you spent on the monthly subscription over the year), leaving a little under $65,000 to account for marketing, the creation of the game (you’re going to pay yourself, right?) and any profit you want to actually make. For Unity the upfront costs are higher but that 5% cut is gone, so rather than costing you $5,240 you are only spending $900. When your margins are slim that’s important.
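If you want to plug in your own numbers, the sums above fit in a few lines of Python. This is only a rough sketch: the function names are mine, the 30% store cut and the $240 and $900 yearly subscription totals are taken straight from the example, and it ignores tax, refunds and anything else a real budget would have to cover.

```python
def engine_costs(gross, royalty_percent, subscription_total):
    """Total paid to the engine vendor: royalty on gross plus subscription fees."""
    royalty = gross * royalty_percent // 100
    return royalty + subscription_total


def left_over(gross, store_percent, engine_cost):
    """What remains after the store's cut and the engine costs."""
    store_cut = gross * store_percent // 100
    return gross - store_cut - engine_cost


GROSS = 100_000       # one year of sales, from the example above
STORE_PERCENT = 30    # distribution cut, from the example above

# 5% royalty plus $240 of subscription fees over the year
royalty_deal = engine_costs(GROSS, royalty_percent=5, subscription_total=240)
# no royalty, $900 of subscription fees over the year
flat_deal = engine_costs(GROSS, royalty_percent=0, subscription_total=900)

print(royalty_deal, left_over(GROSS, STORE_PERCENT, royalty_deal))  # 5240 64760
print(flat_deal, left_over(GROSS, STORE_PERCENT, flat_deal))        # 900 69100
print(royalty_deal - flat_deal)                                     # 4340
```

Once the subscription is subtracted as well, the royalty deal leaves $64,760 and the flat deal $69,100. The gap between the two grows with every extra dollar of sales, since only the royalty scales with gross.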

A Wishlist

So what would I like to see from an ideal commercial game engine?

- A company that puts empowering developers as their first priority. Without this the project is doomed. The entire mission for any new feature should be to make the UX as good as possible within the other constraints. The tools and code should be extensively documented and that documentation should be easy to search and use. Video tutorials and regular education opportunities would be excellent. Further, support for the community is a must, as very often people will solve each other’s problems.

- A clean architecture for making games. This essentially means that the process of creating a new game entity should not be unnecessarily convoluted, nor should the resulting entity be polluted with lots of unnecessary stuff. I’m very much biased towards engines that make use of an entity-component system these days. The architecture should make inter-entity communication simple and event driven. There should be templates for scenes and entities which provide good support for procedural generation. All the engine code should be well tested, by which I mean it’s covered by unit and integration tests. Further, it should be easy to write and run these tests on game code.

- The engine should be fast. Game developers still want to push hardware particularly on fixed platforms like consoles. This means at the very least the engine needs to be multi-threaded probably with a generic jobs system to make use of the cores that are available on various platforms.

- The engine should be portable. If it can’t build to consoles, web, mobile platforms, Linux and desktop OSes then that’s a bit of a deal breaker. Not because you necessarily want to deploy to all those platforms but because having the option means you can, should your company’s plans change. This is important from the perspective of productivity, as porting a project can be an awful lot of work, especially if you need to learn a new engine to do so!

- Iteration times should be blazing fast. Data should be editable on the fly and it should be ludicrously easy to experiment with ideas. It’s even better if code can be live reloaded, usually from script to avoid the pitfalls of compilation where certain cases will force you to tear down everything.

- It should be easily extensible. An editor you cannot customise is pretty useless. Doubly so if you want to fit it into any sort of Continuous Integration/Deployment or want to implement a more complex build process.

- Integrated. At a minimum the editor should support various forms of source control. Support for other popular developer tools out of the box would be gravy.

- Source access. Big, ambitious projects will almost always need to modify the engine internals.

- Feature rich. This comes last. Shiny features are no good without the above. Unity has amply demonstrated you can have a feature rich editor simply by empowering developers.
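To make the entity-component and event-driven wish a bit more concrete, here is a minimal sketch in Python. None of this is any particular engine’s API: `EventBus`, `Entity`, `Health` and the `"damage"` and `"died"` event names are all made up for illustration. The point is only that an entity is a bag of small components and that entities talk to each other through events rather than holding references to one another.

```python
from dataclasses import dataclass, field


class EventBus:
    """Routes named events to whichever handlers subscribed to them."""

    def __init__(self):
        self._handlers = {}

    def subscribe(self, event_name, handler):
        self._handlers.setdefault(event_name, []).append(handler)

    def publish(self, event_name, **payload):
        # Copy the list so handlers may subscribe or publish while we iterate.
        for handler in list(self._handlers.get(event_name, [])):
            handler(**payload)


@dataclass
class Health:
    """A hypothetical component: plain data, no behaviour."""
    points: int


@dataclass
class Entity:
    """An entity is just a name plus whatever components were attached."""
    name: str
    components: dict = field(default_factory=dict)

    def add(self, component):
        self.components[type(component)] = component
        return self

    def get(self, component_type):
        return self.components.get(component_type)


def make_damage_handler(entity, bus):
    """Build a handler that applies damage events aimed at this entity."""
    def on_damage(target, amount):
        health = entity.get(Health)
        if target is entity and health is not None:
            health.points = max(0, health.points - amount)
            if health.points == 0:
                bus.publish("died", who=entity)
    return on_damage


bus = EventBus()
player = Entity("player").add(Health(10))
bus.subscribe("damage", make_damage_handler(player, bus))

deaths = []
bus.subscribe("died", lambda who: deaths.append(who.name))

bus.publish("damage", target=player, amount=4)
bus.publish("damage", target=player, amount=6)
print(player.get(Health).points, deaths)  # 0 ['player']
```

Whatever raises the damage event never needs to know what listens for it, which is what keeps new entities cheap to add and easy to unit test in isolation.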

Now that these opening salvos in a battle over license cost have been fired we’ll hopefully see a wider war waged on empowering developers with tools that really enhance their productivity.

In my post Cross-Functional People I talked about the value of generalists and how they prevent agile development from getting really screwed up. I thought it would be good to look to the other side. Are there areas of game development where we want deep specialization and more general skills aren’t really required? My instinctual reaction is yes and they’re most valuable in the parts of games development that are better understood or perhaps to put it better less volatile. They are the areas of development where you perhaps wouldn’t have lots of traditional specializations taking part together so don’t have the problems associated with internal team dependencies. For example a lot of rendering or engine engineering doesn’t have much call for anyone other than software engineers if your automated testing is good. Similarly making high-quality production art is not something you need much in the way of engineering to do once pipelines and tools are good.

Or perhaps a better way of thinking about it is that they still need to be cross-functional people but in a more specific domain. Just as being a cross-functional game developer is a different domain to being a cross-functional OS developer. The wider the scope of the domain the team is tackling the wider the scope of skills you need. In this respect it might be more sensible to consider these domains as specific specialized sub-teams who still work in an agile manner but serve as components to the teams with a wider scope. A good engine team empowers a feature team just as having a solid game at the end of pre-production empowers the content creators and artists to fill the game during production. Although I’d argue that many teams would be better learning something new for tasks with small scope rather than having often messy dependencies with other teams or individuals. For example on a small team it’s probably better in the long-term to learn to write shaders than contract that out to someone else.

So in the end I’m arguing for specialization of the team rather than the individual. That results in a different set of requirements for the functions that need to be filled, but the team members should still be cross-functional. Further, this can only really be an issue in large scale development. Small game teams by necessity have to either learn new skills or contract in specializations they lack. This also speaks to a requirement for large teams to be organised differently as they move through the game development lifecycle.

In this post I’m going to continue setting up some ground work to talk about Agile and Games Development by discussing the Game Development Lifecycle. A lifecycle in this context is the identifiable stages of production a project moves through from initial conception through to shipping (or going live in the case of services). I think the games lifecycle is pretty much divorced from the project management methodology you follow. There are simply several stages a game goes through, whether they are explicitly recognised or not. Much of what I write below is idealised in order to illustrate what I think the stages are and what happens in them. I’ve also deliberately ignored stuff like marketing as I’m no expert and it’s something so dependent on the chosen business model.

Here we have the lifecycle:

A couple of initial things to note off the bat. Firstly, there is no scale to this diagram; it ultimately captures the process in a fractal manner. As you decompose a game you still need to go through all of these steps for each component of the design. For example, if you are adding a new feature to an existing game you still need to work out if it’s a good idea or not, so prototyping is only sensible. The second thing to note is that the transitions, and where exactly a game is, might not be clear in any particular organisation. Some organisations are very formal, have very specific hand-offs and might even abandon work (but keep the game artifacts made). Others, particularly smaller teams, can be very fluid and the move from prototype to shipped product is a series of refactorings of the game. In my opinion almost all games go through these lifecycle stages and quite a lot of project cock ups come from not respecting the limitations of each stage. For example, if a project goes through a sudden large pivot during some of the latter stages of development then it behooves people to recognize that they will need to drop back in the game development lifecycle for substantial portions of the game to prove it out again.

Moving from left to right through the stages in the diagram should lead to a firming up of the game ideas. It’s an oft said thing that the initial game concept bears only a passing resemblance to the final game and that’s because ideas are just the starting point. Ideas need to be tested and refined, preferably in the crucible of real player experience. This is one reason why putting a massive amount of time into a monolithic design document before anyone starts making anything is a terrible idea. Moving through the lifecycle stages should also firm up knowledge about when the game will be shippable and the scope of work remaining. For a lot of projects this doesn’t happen though and I think a decent Agile approach that takes some ideas from Lean would help approach the lifecycle stages with the right mindset.

Idea

The cornerstone of any project but also the least important part. Ideas are ten-a-penny and it’s the ability to take that idea and build something good from it that is hard. Sometimes the team you have might not be a good fit for making a particular idea or the resources required are unrealistic for what you want to achieve or there is no real market for your idea. There are lots of reasons not to pursue an idea but at this stage many benefit from a little making to gain a better understanding of what might be involved. I’m a firm believer that there is a lot of value in a failed idea and even more value in having a whole bunch of ideas you like in existence as prototypes. Ideas should be given a quick sanity check before moving onto the next stage without getting too involved in details that will likely change further on in the lifecycle anyway.

Prototyping

Let’s make a game! In game development it’s the job of a prototype to take the core game ideas and demonstrate that the team is capable of turning them into something fun. This is necessarily messy and exploratory. It’s also an ideal time to stop spending more money on an idea if it seems to be going nowhere. I’ve written at more length on the value of prototyping to both individuals and businesses as well as having spoken about my own experiences. The end result should be an entry in your prototype repository and a decision about whether or not the prototype was deemed a success. Prototypes are also very useful for securing funding to take the game into pre-production as well as validating your game idea by letting real people get their hands on it.

Pre-Production

Pre-Production begins when you take a prototype and decide to make it into a complete game. It’s the process of taking the core game ideas, refactoring them and expanding on them to create a meatier game with the view to nailing down most of the game systems and features. As this progresses the underlying technology is generally refactored as well, to make it technically better and suited to the creation of the large amounts of content many games need. The goal of pre-production is typically to end up in a position where the majority of the work remaining is content creation without large changes to game features. Usually this culminates in a ‘vertical slice’ of the game containing every aspect demonstrated at production quality in a ‘one level’ run through. Making a ‘vertical slice’ also exposes the weaknesses in the technology and content creation pipelines that need to be fixed before the game should be put into production. There should be further validation of the game with lots of real people all the way through this process as the game changes and by this point you should have a good idea of the quality of game you are going to put out.

Production

Production is the point where you get hard at work cranking out game content, which is often the most time consuming part. At this point it can get incredibly expensive to make relatively innocuous seeming changes to the game design. For example, altering the attributes that define how a character jumps at a late stage might mean you need to rework all the content in your game. Ouch! Ideally people not concentrating on making content or supporting content creators will be fixing defects, optimizing the game, improving the user experience or ‘juicing it’. Hopefully it goes without saying that you should be putting all this new content in front of real people all the time to validate that it’s fun and works well with the game pacing (e.g. everyone fails then gives up on stage 3, maybe we should fix that).

Polish

Polishing is the final step that takes a game from being good to great. It’s adding more ‘juice’, improving the user experience, making sure the game pacing is great using feedback gained from playtesting with real people, squashing as many defects as possible and making sure the game runs smoothly on all targeted platforms. A lot of the time this runs in sync with production as some content is often finished before other parts. That said, a bit of time at the end of a project dedicated to making the game much more polished can make all the difference. A word of warning though: games never feel finished to the people working on them. At some point you just have to stop and put it out into the world.

Ship

Champagne or shame, this is where the rubber hits the road and your game either crashes and burns or achieves some reasonable commercial success. It’s also the hardest point to reach for all sorts of reasons, so it’s quite an achievement to be part of a team that ships something even if it’s not a commercial success.

Cross-functional people, AKA T-shaped people or Generalizing-Specialists. The idea is that as well as a deep understanding of one subject you want someone with a more general understanding of many others. The concept is not new but it’s quite hard for people to take on board as we live in a culture that raises us to value specialization. Even in education people are pushed further and further into their own little box until a fresh faced person pops out who has “this is my specialty” emblazoned into their mind. The problem with this approach is that it sets up boxes that people are put into based on their label. Even if a team is cross-functional, with a mix of the specializations required to build a product, people are often trapped in their ‘specialization box’ and this can manifest all sorts of problems:

Slack Time – You have a workflow defined by specializations: the designer will design the stories you have in this iteration, the engineer will build them, and then the result will be tested for correctness by a QA person and the defects found fixed. The problem here is that the poor QA person will only get a couple of days to do all of the testing for any artifacts created in an iteration and will have spent the rest of their time not working on the things the team is doing. Likewise the designer will have to be done early in an iteration in order for there to be development and testing time. The engineer is in an even worse position as they will be twiddling their thumbs at the start and end, with an unknown amount of work to cram in right at the end to fix any defects. The team is not really working as a team but as an assembly line. In fact it’s just the old-school silo by specialization approach in miniature. Working in time-boxed iterations and being co-located have lots of advantages but now people have to scramble about for things to do to fit into the awkward gaps. Worse still, planning each iteration is a nightmare of trying to fit together tasks given the variable length of time design, development and testing may take. This leads to a tendency for each iteration to bleed into the next as estimation goes awry. We could just make the iterations longer but that makes us less able to respond to changes and increases slack time. Often this leads to a naive fix:

Working Off Cadence – Brilliant! We know we’re a waterfall production line in reality so we’ll get the designer to make a design in an iteration. Then the engineer can build it in the next. Finally the QA person can test the end result and the engineer can fix any defects in the final iteration. Now we can all work in parallel at different points in the cycle! Only now we’re even less of a team than we were before! Plus each of us will have to context switch all the time to deal with team members in earlier and later iterations. We’re also now taking three iterations to make anything so after the first three iterations we will be constantly delivering things at the old rate but there will be three times as long between starting something and receiving any feedback on it. In that time we may have designed new systems that take advantage of things in mid-flow only to have to rework both things when feedback comes in. Clearly this system is better at delivering a constant stream of things provided we don’t make any mistakes. However the cost of mistakes, people going on holiday or getting ill just increased a lot! We’re also setting ourselves up to make mistakes by dividing the attention of everyone between several different things in flow. This doesn’t sound good at all!
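The throughput versus feedback trade-off in that naive fix is easy to see with a toy model. The sketch below is my own simplification, not a real planning tool: it assumes every stage (design, build, test and fix) takes exactly one iteration and that a new feature enters the pipeline every iteration. The function names are made up for illustration.

```python
def off_cadence_schedule(num_features, stages=3):
    """Return (start_iteration, delivery_iteration) for each feature.

    Assumes one iteration per stage and one new feature started per iteration.
    """
    return [(start, start + stages - 1) for start in range(1, num_features + 1)]


def in_flight(schedule, iteration):
    """Count features that are somewhere in the pipeline during an iteration."""
    return sum(1 for start, done in schedule if start <= iteration <= done)


schedule = off_cadence_schedule(5)
lead_times = [done - start + 1 for start, done in schedule]

print(schedule)                # [(1, 3), (2, 4), (3, 5), (4, 6), (5, 7)]
print(lead_times)              # [3, 3, 3, 3, 3]
print(in_flight(schedule, 3))  # 3
```

From iteration three onwards something is delivered every iteration, but each feature still waits three iterations for feedback and everyone has three different features competing for their attention at once.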

The answer to this problem is to let people break out of their box and collaborate to make things. Specialists become facilitators and mentors to the team in their area of expertise and the team actually works together to design, build and test the product. The risk of mistakes is lowered as the team is bringing their expertise to bear on each stage of development, the team is more resilient to everyday problems like illness, and ultimately the team will be able to self-organize better to account for members’ strengths and weaknesses.

A few days ago I posted a quote from the Hearthstone developer Eric Dodds about how great it is to work with cross-functional people on a team because everyone is involved and that leads to a better game. But why is that so? I think several things come into it:

Shared Vision – The team builds and truly owns the vision for their work, rather than a few team members doing it in isolation. This means everyone is on the same page building something they feel ownership of. It’s a massive boon to motivation and team building. The team has a purpose and work becomes more enjoyable. It also cuts out a lot of the communication problems caused in trying to articulate ideas namely that it’s very easy to miss important things out when translating ideas to text and the text is easily misinterpreted. Chinese-whispers on paper.

Autonomy – Individuals are no longer locked in a box and are allowed to grow as their talents allow. There has been a lot written on the power of autonomy as an intrinsic motivator.

Increased Development Velocity – Many hands make light work. When free of their box, people will do what needs to be done to finish their vision rather than making work to fill their time. The more work on the actual product that will fit into the time and budget requirements the better it will be, particularly if people are invested in making it great. Increased velocity also brings with it a sense of accomplishment.

The other place to look for the success of this approach is with Indie games. Necessity drives people to be generalists. You can’t really be an Indie game designer unless you can actually make something. Likewise just being able to program a kick ass piece of software or make awesome art doesn’t get you very far unless you are willing to work on a contract basis. These days awesome tools mean generalists can get 90% of the way to making some very complex products and only need to bring specialists in for certain tasks.

The take-away from this is that you are hurting the agility of your company or project if you have an over-reliance on the concept of specialization and aren’t encouraging teams to work collaboratively to spread knowledge and skills.

It’s fantastic to have a game where everyone on the team is involved with multiple aspects of the game; we’re already a team of generalists, with designer-artists and programmer-designers and the like, but the small team fosters an environment where everyone gets intrinsically involved in everyone else’s business, and I think it made for a better game.

I was speaking about prototyping at a conference in Finland and got asked a question that left me fumbling for an answer.

“How much of my budget should I spend on prototyping?”

I’m insulated from budgets at work and don’t consider them at home. My pretty lame answer to being put-on-the-spot was that I didn’t really know and that I personally consider prototyping an incredibly important part of making anything so I would earmark a lot of budget towards it. It’s a pretty bad answer in hindsight because the most expensive parts of game development by far are content creation and marketing. Trying to place a dollar value on something like prototyping is hard as the value added is mostly a reduction in project risk. Asking “how much time and therefore money did we save by not doing something?” is equivalent to “how long is a piece of string?”

To me the value of prototyping is two-fold.

Value to the Individual

In games we’re seeking to produce experiences that elicit emotions, the most common being enjoyment of something fun. There is no real way of knowing in advance if a game idea is fun or not, or if it’s something you really want to make. Making videogames is an act of creation and it is a craft. Other acts of creation follow a similar pattern. Writers need to write a lot to get good at writing. Painters need to paint a lot to get good at painting. You need to knit a lot to get good at knitting. To get good at making videogames you need to make a lot of videogames. The more you explore and make things the better refined your process and skills become. You also gain a great deal of knowledge and experience which you can use as future shortcuts. Prototyping is essentially the act of making the core game experience or a feature as quickly as possible to see if it’s good or not. It follows that prototyping lots of small games, systems and other ideas is a great way to get better at making games.

Having a large portfolio of prototypes is also a great way of demonstrating a variety of skills to potential employers.

Value to the Business

As I said above, the main business value of prototyping is a reduction in project risk. Early post-Waterfall methodologies noticed this, for example Boehm’s Spiral Model. It should be pretty clear to most people that the first version of pretty much anything is terrible in at least one aspect. In the Spiral Model development doesn’t move through a series of stages à la Waterfall but in a series of iterations that result in a series of prototypes before the product is finally put into production. It recognizes that we can’t effectively reason about an idea without a concrete implementation. Project risk can be mitigated with prototyping in a few ways:

- “Fail fast” – Today’s trendy business phrase. But as above there is real value in realizing early that you shouldn’t do something, rather than wasting resources chasing it in a heavyweight fashion until you realize it is unworkable or rubbish.

- Improving communication – Ever sat in a meeting room to discuss a design document and found you spent the entire meeting squaring away different interpretations of the doc? Text is a horrible way to communicate ideas about a game, which is a complex, interactive, aural and visual experience. You can see this in the drift as a younger generation rely on Let’s Play videos more than traditional journalism to make purchasing decisions. Simply watching someone play the game tells you more about it than the reviewer’s opinion of the experience put into print. The same is true for prototypes: they give a concrete entity for demonstration, reasoning and discussion.

- Expose unknowns – Upfront design has long been fraught if you only have a muddy picture of your requirements. Nothing can get much muddier than games where the game at the end can often bear little resemblance to the initial idea. Prototyping at least gives you some insight into what the unknowns will be.

- Avoiding “Sunk Cost” – Avoiding the sunk cost fallacy is at its heart not “throwing good money after bad” or, better still, knowing when to kill a project despite having a large amount of money invested in it. Developing concrete prototypes makes evaluating the project much easier.

There is also less tangible value added by keeping prototyping in mind. Businesses are beginning to realize that, like a server, a person can’t run at a hundred percent capacity without losing overall efficiency over time. Google made the practice famous but a whole variety of companies are letting employees take charge of a percentage of their work time. For example, here at CCP on the EVE project we have twenty percent of our working time available for our own projects. It’s the lowest priority work and entirely optional but it’s there and people use it. Beyond providing a buffer for work tasks that take longer than expected it also makes sure teams aren’t burning themselves out. Encouraging prototyping in this time has a couple of benefits. Firstly, the business can discover new business opportunities. For CCP that paid off in a big way with EVE: Valkyrie. Even if the initial prototype hadn’t been greenlit into development, the visibility raised must have been worth many times more in equivalent marketing spend than it cost the company. Granted, the core team that made the initial prototype eventually put way more of their own hours into the project, but it’s an interesting example of these things “going wild” and surprising the executive staff. Secondly, it helps keep people motivated. No one enjoys drudge work but it often has to be done, and spending a few hours each week on something you find exciting is good compensation.

Whilst I wouldn’t expect prototyping efforts to spin off new products regularly, they do also give the business a bunch of products with some ground work already done that they could evaluate and invest in when it comes time to do so. Hopefully this sort of culture will experience less downtime between projects, and less project risk, by not having to start the investigation of multiple ideas from scratch.

Finally the value added by each individual getting better at their craft is not only a tangible benefit to that individual but to the company as a whole.

How much of my budget should I spend on prototyping?

Let’s revisit the initial question. Prototyping is a tool to investigate and conquer the unknown so the budget requirement comes down to how well you really know what you want to make. I guess the answer I’m shooting for now is make it a significant part of your process, especially at project conception and during pre-production.

It’s time to wrap up the CCP Game Jam posts with a round-up of the remaining games that were made during the jam.

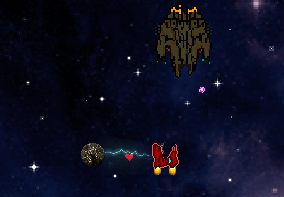

First up is the eminently beardy Team Clean Shaven who made two games, or three if you count that one of them was split into two separate executables! The first game, H.E.A.R.T., was a shmup made in Unity, divided into a general vertical scrolling kill-all-the-things game with the second portion being a specific example of a boss battle. The second game they made, One Heart to Make, was a pattern matching board-game where up to three players, as the club, diamond and spade suits, battled to reassemble the heart.

Team Heartless very nearly missed out on the Player’s Choice Award with their take on dating in Heartbreak Date. The game consisted of a few minigames all bonded together to make a terrible dating experience. Players get the opportunity to try to attract a waiter’s attention, try to stay awake whilst their date is talking and play a brutally hard game where they have to say the things that their date is thinking about to feign interest. Their game was also very well put together and a special shout-out has to go to Scott Rhodes’ excellent audio, including some inspired voice acting.

Last but not least is Team Pheasant Preserve who quite possibly made the first game ever in Confluence. Ultimate Wingman Simulator: Heartbreaker Edition – Tragic Confluence was a piece of well written and illustrated interactive fiction. The game was initially intended to be a point-and-click adventure but time pressure forced them into a different format.

After the teams had finished their 36 hour marathon development effort we had a Showcase event in the office canteen. The rest of the Reykjavik office was invited along to try out the games people had made whilst enjoying a few beers. During the Showcase we ran some voting with each person able to vote for one project to be the Player’s Choice. Team Yellow Brick were the winners with their take on the Land of Oz. You play the Tinman desperate to get a heart in order to win over Glinda and have her accompany you to the Valentine’s Day Ball. Each stage of the game sees the Tinman attempting to steal the heart of one of the other famous characters from the Wizard of Oz as part of a bloody rampage. Between each stage the story unfolds via some rather lyrical poetry. The game was built in HTML5 using the Phaser framework.

Tomorrow I’ll wrap up with a quick look at the rest of the games.